Ecosystem Graphs: The Social Footprint of Foundation Models

Authors: Rishi Bommasani and Thomas Liao and Percy Liang


Foundation models (FMs) are the defining paradigm of modern AI. Beginning with language models like GPT-3, the paradigm extends to images, videos, code, proteins, and much more. Foundation models are both the frontier of AI research and the subject of global discourse (NYT, Nature, The Economist, CNN). For example, UK Prime Minister Rishi Sunak announced an FM taskforce as a top-level national priority. Given their remarkable capabilities, these models are widely deployed: Google and Microsoft are integrating FMs into flagship products like Microsoft Word and Google Slides, while OpenAI’s GPT-4 is powering products and services from Morgan Stanley, Khan Academy, Duolingo, and Stripe. FMs have seen unprecedented adoption: Reuters recently reported that ChatGPT, launched in November 2022, is the fastest-growing consumer application in history.

With FMs on the rise, especially in light of billions of dollars of funding to FM developers (Adept, Anthropic, Character, OpenAI, Perplexity), transparency is on the decline. OpenAI’s GPT-4 report reads: “Given both the competitive landscape and the safety implications of large-scale models like GPT-4, this report contains no further details about the architecture (including model size), hardware, training compute, dataset construction, training method, or similar.” At the Center for Research on Foundation Models, we are committed to ensuring foundation models are transparent. To date, we have built HELM to standardize their evaluation, argued for community norms to govern their release, and advocated for policy to expand these efforts.

To clarify the societal impact of foundation models, we are releasing Ecosystem Graphs as a centralized knowledge graph for documenting the foundation model ecosystem. Ecosystem Graphs consolidates distributed knowledge to improve the ecosystem’s transparency. Ecosystem Graphs helps us answer many questions about the foundation model ecosystem:

- What are the newest datasets, models, and products?

- Which modalities are trending? How are models scaling over time?

- If an influential asset like the OpenAI API goes down, what products would be affected?

- Which video FMs are permissively licensed so I can build an app using them?

- If I find a bug with an FM-based product, where can I report my feedback?

- Which organizations have the most impactful assets? How do FMs mediate relationships between institutions? Who has power in the ecosystem?

Ecosystem Graphs currently documents the ecosystem of 200+ assets, including datasets (e.g. The Pile, LAION-5B), models (e.g. BLOOM, Make-a-Video), and applications (e.g. GitHub Copilot X, Notion AI), spanning 60+ organizations and 9 modalities (e.g. music, genome sequences). Ecosystem Graphs will be a community-maintained resource (akin to Wikipedia) that we encourage everyone to contribute to! We envision Ecosystem Graphs will provide value to stakeholders spanning AI researchers, industry professionals, social scientists, auditors and policymakers.

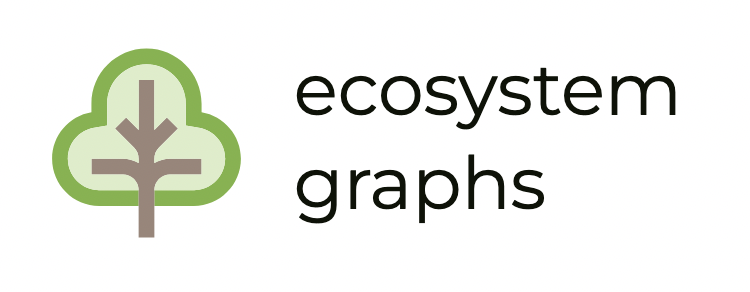

The Ecosystem

Like all technologies, foundation models are situated in a broader social context. In Figure 1, we depict the canonical pipeline for such development, whereby datasets, models, and applications mediate the relationship between the people on either side.

Concretely, consider Stable Diffusion:

- People create content (e.g. take photos) that they (or others) upload to the web.

- LAION curates the LAION-5B dataset from the CommonCrawl web scrape, filtering it for problematic content.

- LMU Munich, IWR Heidelberg University, and RunwayML train Stable Diffusion on a filtered version of LAION-5B using 256 A100 GPUs.

- Stability AI builds Stable Diffusion Reimagine by replacing the Stable Diffusion text encoder with an image encoder.

- Stability AI deploys Stable Diffusion Reimagine as an image editing tool to end users on Clipdrop, allowing users to generate multiple variations of a single image (e.g. imagine a room with different furniture layouts). The application includes a filter to block inappropriate requests and solicits user feedback to improve the system as well as mitigate bias.

While framed technically (e.g. curation, training, adaptation), the process bears broader societal ramifications: for example, ongoing litigation contends that the use of LAION-5B and the subsequent image generation from Stable Diffusion are unlawful, infringing on the rights and the styles of data creators.

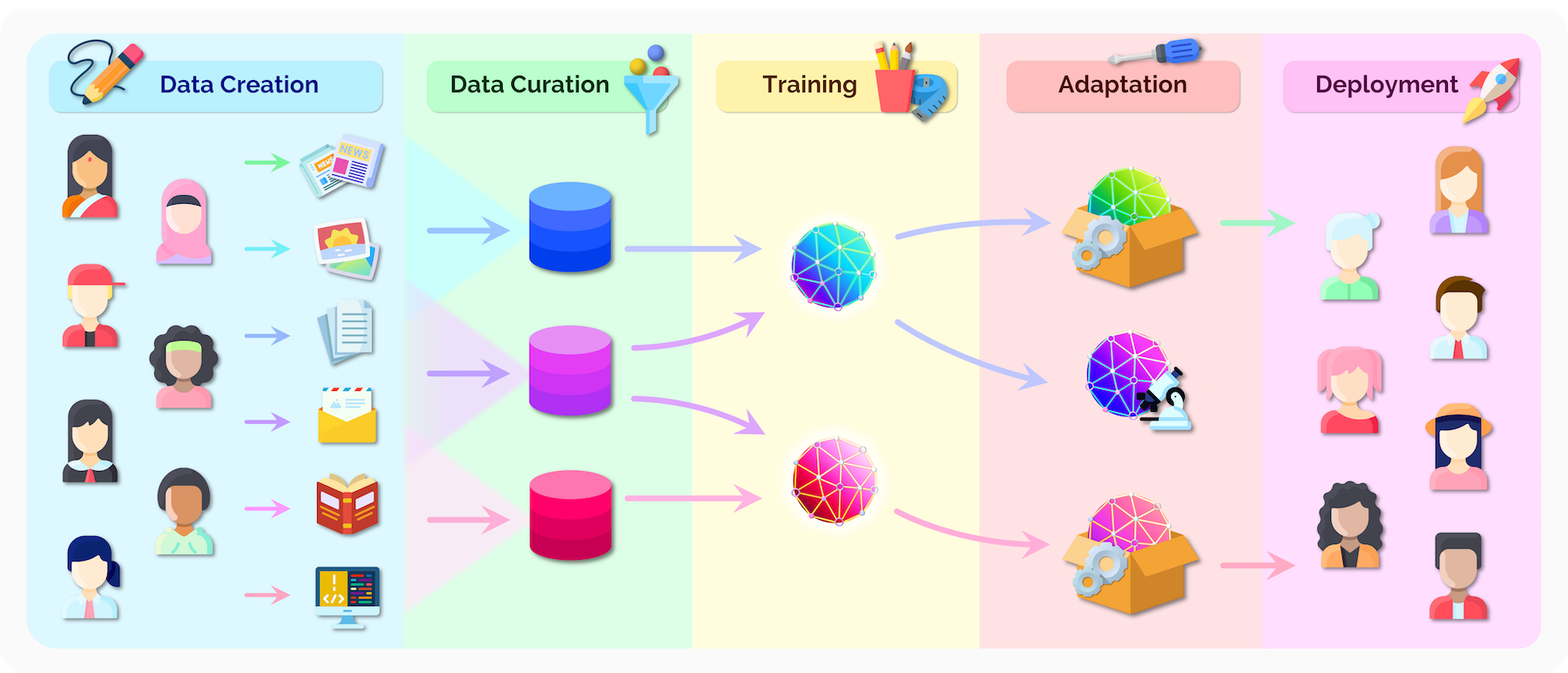

Framework

The ecosystem graph is defined in terms of assets: each asset has a type (one of dataset, model, application). Examples include The Pile dataset, the Stable Diffusion model, and the Bing Search application. To define the graph structure, each asset X has a set of dependencies, which are the assets required to build X. For example, LAION-5B is a dependency for Stable Diffusion and Stable Diffusion is a dependency for Stable Diffusion Reimagine. To supplement the graph structure, we annotate metadata (e.g. who built the asset) for every asset in its ecosystem card. In Figure 2, we give several examples of primitive structures (i.e. subgraphs) that we observe in the full ecosystem graph:

- The standard pipeline: The Jurassic-1 dataset is used to train Jurassic-1, which is used in the AI21 Playground.

- A common adaptation process: BLOOM is adapted by fine-tuning on xP3 to produce the instruction-tuned BLOOMZ model.

- Layering of applications: The GPT-4 API powers Microsoft 365 Copilot, which is integrated into Microsoft Word.

- Dependence on applications: While applications are often the end of pipelines (i.e. sinks in the graph), applications like Google Search both depend on FMs like MUM and support FMs like Sparrow (e.g. through knowledge retrieval).
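
The graph definition above (typed assets, each with a set of dependencies) can be sketched in a few lines of Python. This is only an illustrative sketch, not the actual Ecosystem Graphs schema: the `Asset` class, its field names, and the `build_graph` and `downstream` helpers are all hypothetical, and the asset types assigned below are our reading of the examples in the text. The `downstream` helper shows how a question like "if an asset goes down, what would be affected?" reduces to a traversal over dependency edges.

```python
from dataclasses import dataclass

# The three asset types named in the framework.
ASSET_TYPES = {"dataset", "model", "application"}


@dataclass(frozen=True)
class Asset:
    """Hypothetical record for one node in the ecosystem graph."""
    name: str
    type: str
    dependencies: tuple = ()  # names of assets required to build this one

    def __post_init__(self):
        if self.type not in ASSET_TYPES:
            raise ValueError(f"unknown asset type: {self.type!r}")


def build_graph(assets):
    """Index assets by name and check that every dependency is itself an asset."""
    graph = {asset.name: asset for asset in assets}
    for asset in assets:
        for dep in asset.dependencies:
            if dep not in graph:
                raise KeyError(f"{asset.name!r} depends on unknown asset {dep!r}")
    return graph


def downstream(graph, name):
    """Return the names of all assets that directly or transitively depend on `name`."""
    # Invert the dependency edges: dependency -> set of direct dependents.
    dependents = {}
    for asset in graph.values():
        for dep in asset.dependencies:
            dependents.setdefault(dep, set()).add(asset.name)
    # Depth-first traversal over the inverted edges.
    affected, stack = set(), [name]
    while stack:
        current = stack.pop()
        for child in dependents.get(current, ()):
            if child not in affected:
                affected.add(child)
                stack.append(child)
    return affected


# Edges taken from the examples in the text; types are an assumption.
ASSETS = [
    Asset("xP3", "dataset"),
    Asset("BLOOM", "model"),
    Asset("BLOOMZ", "model", ("BLOOM", "xP3")),
    Asset("GPT-4 API", "application"),
    Asset("Microsoft 365 Copilot", "application", ("GPT-4 API",)),
    Asset("Microsoft Word", "application", ("Microsoft 365 Copilot",)),
]
GRAPH = build_graph(ASSETS)

print(sorted(downstream(GRAPH, "GPT-4 API")))
# ['Microsoft 365 Copilot', 'Microsoft Word']
```

Storing only the dependencies on each asset keeps each record self-contained; the reverse edges needed for impact queries are derived on demand.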

Value

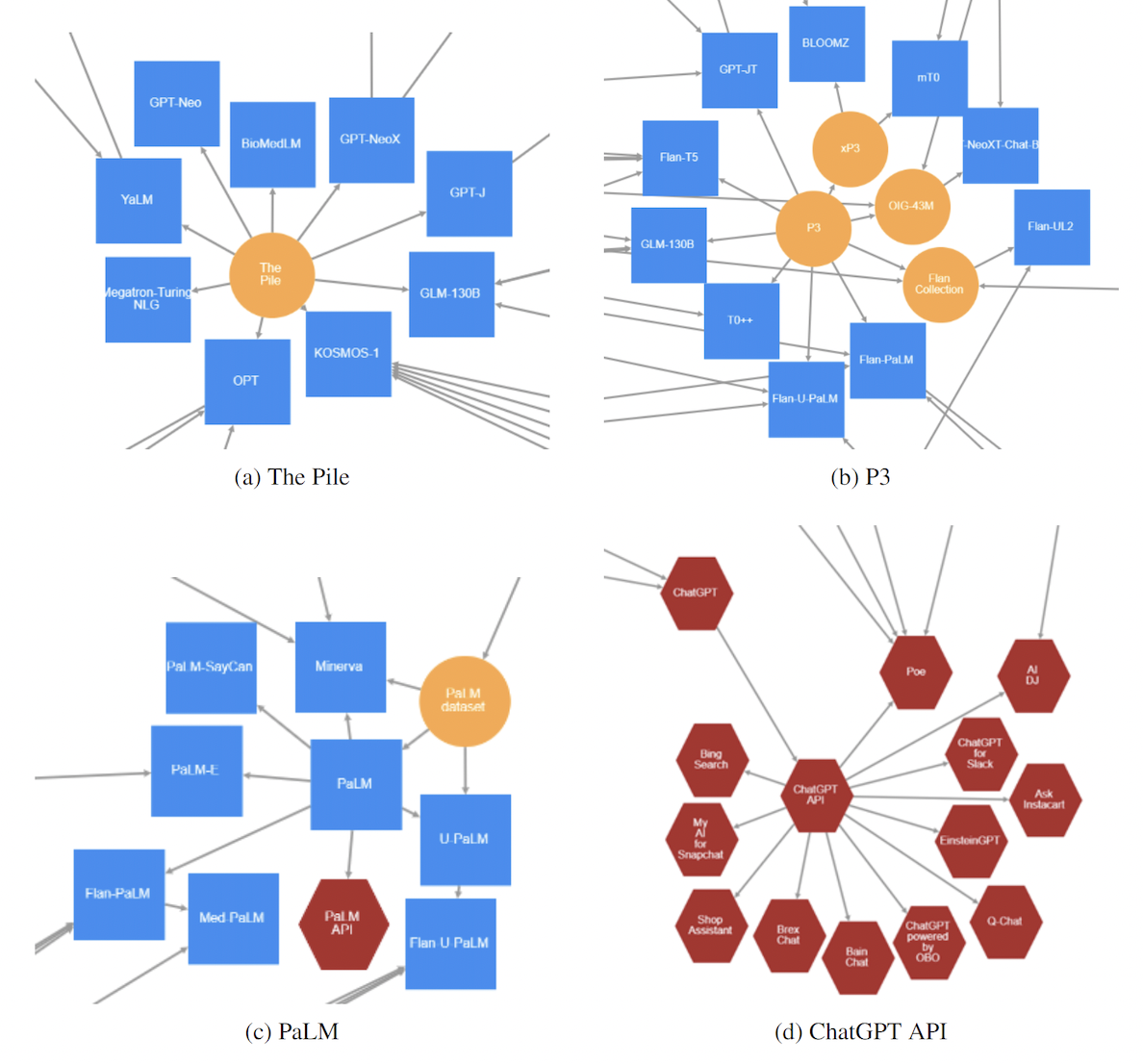

Ecosystem Graphs will provide value to a range of stakeholders: developers, AI researchers, social scientists, investors, auditors, policymakers, end users, and more. To demonstrate this value, we zoom into the hubs in the ecosystem: hubs are nodes with unusually high connectivity, featuring prominently in the analysis of graphs and networks. Figure 3 identifies four such hubs.

- The Pile is an essential resource for training foundation models from a range of institutions (EleutherAI, Meta, Microsoft, Stanford, Tsinghua, Yandex).

- P3 is of growing importance as interest in instruction-tuning grows, both directly used to train models and as a component in other instruction-tuning datasets.

- PaLM features centrally in Google’s internal foundation models for vision (PaLM-E), robotics (PaLM-SayCan), text (Flan-U-PaLM), reasoning (Minerva), and medicine (Med-PaLM), making the recent announcement of the PaLM API for external use especially relevant.

- The ChatGPT API profoundly accelerates deployment with downstream products spanning a range of industry sectors.
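
As a rough illustration of how hubs like these could be surfaced, the sketch below counts the direct dependents of each asset and flags those at or above a cutoff. The text does not define "unusually high connectivity" precisely, so the `min_dependents` threshold, the function names, and the edge-list representation are all assumptions made for this example; the edges themselves are limited to relationships mentioned in this post.

```python
from collections import Counter

# (dependency, dependent) pairs drawn from examples mentioned in the post.
EDGES = [
    ("PaLM", "PaLM-E"),
    ("PaLM", "PaLM-SayCan"),
    ("PaLM", "Flan-U-PaLM"),
    ("PaLM", "Minerva"),
    ("PaLM", "Med-PaLM"),
    ("BLOOM", "BLOOMZ"),
    ("xP3", "BLOOMZ"),
    ("GPT-4 API", "Microsoft 365 Copilot"),
]


def direct_dependents(edges):
    """Count how many distinct assets directly depend on each asset."""
    # set() removes duplicate edges so each dependent is counted once.
    return Counter(dependency for dependency, _ in set(edges))


def find_hubs(edges, min_dependents):
    """Return assets with at least `min_dependents` direct dependents.

    The threshold is a hypothetical stand-in for "unusually high
    connectivity". Results are sorted by descending count, then by name.
    """
    counts = direct_dependents(edges)
    hubs = [name for name, count in counts.items() if count >= min_dependents]
    return sorted(hubs, key=lambda name: (-counts[name], name))


print(find_hubs(EDGES, min_dependents=3))
# ['PaLM']
```

Counting only direct dependents is the simplest notion of connectivity; a fuller analysis might also count transitive dependents or compare each count against the distribution over the whole graph.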

For asset developers, hubs indicate their assets are high impact. For economists, hubs communicate emergent market structure and potential consolidation of power. For investors, hubs signal opportunities to further support or acquire. For policymakers and auditors, hubs identify targets to scrutinize to ensure their security and safety. Beyond hubs, we present more extensive arguments for why Ecosystem Graphs is useful in the paper.

Conclusion

The footprint of foundation models is rapidly expanding: this class of emerging technology is pervading society and is only in its infancy. Ecosystem Graphs aims to ensure that foundation models are transparent, establishing the basic facts of how they impact society. Concurrently, we encourage policy uptake to standardize reporting on all assets in Ecosystem Graphs: we will release a brief on Ecosystem Graphs as part of our series on foundation models.

Where to go from here:

- Website: Explore the ecosystem and home in on specific datasets, models, and applications.

- Paper: Read more about the framework, design challenges, and value proposition.

- Add assets: Anyone can contribute new assets, independent of technical expertise!

- GitHub: Help build and maintain the ecosystem; we will be adding mechanisms soon to acknowledge significant open-source contributions!

Acknowledgements

Ecosystem Graphs was developed with Dilara Soylu (Stanford University) and Katie Creel (Northeastern University). Our design and implementation were informed by feedback from folks at a range of organizations: we would like to thank Alex Tamkin, Ali Alvi, Amelia Hardy, Ansh Khurana, Ashwin Paranjape, Ayush Kanodia, Chris Manning, Dan Ho, Dan Jurafsky, Daniel Zhang, David Hall, Dean Carignan, Deep Ganguli, Dimitris Tsipras, Erik Brynjolfsson, Iason Gabriel, Irwing Kwong, Jack Clark, Jeremy Kaufmann, Laura Weidinger, Maggie Basta, Michael Zeng, Nazneen Rajani, Rob Reich, Rohan Taori, Tianyi Zhang, Vanessa Parli, Xia Song, Yann Dubois, and Yifan Mai for valuable feedback on this effort at various stages. We specifically thank Ashwin Ramaswami for extensive guidance on prior work in the open-source ecosystem and future directions for the foundation model ecosystem.